Immutability does come with a significant shift in mindset if you're accustomed to writing imperative code that reassigns variables. It brings real benefits to program design, though: immutable values are safe to share between threads, functions that never modify their inputs have no side effects, and code tends to break into small, self-contained pieces.
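Here is a minimal sketch of that shift using Scala's immutable `List`, assuming Scala 2.13. The object and value names are hypothetical. Prepending with `::` does not modify the existing list. It returns a new list whose tail is the old one, so both versions can be used side by side.

```scala
object ImmutabilityDemo {
  val original: List[Int] = List(2, 3)
  // :: builds a new list; `original` is left exactly as it was
  val extended: List[Int] = 1 :: original

  def main(args: Array[String]): Unit = {
    println(original)                  // List(2, 3)
    println(extended)                  // List(1, 2, 3)
    // The new list points at the old one as its tail instead of copying it
    println(extended.tail eq original) // true
  }
}
```

Because `original` can never change, reusing it as the tail of `extended` is safe. This structural sharing is what keeps prepending to an immutable list cheap.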

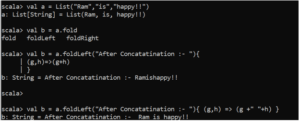

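Basic functions

```scala
// Two ways to build a list: the List(...) factory, or the cons operator :: ending in Nil
val bigData = List("Hadoop", "Spark")
val data = List(1, 2, 3)
val bigData_Core = "Hadoop" :: ("Spark" :: Nil)
val data_Int = 1 :: 2 :: 3 :: Nil

println(data.isEmpty) // false
println(data.head)    // 1
println()

// Pattern matching takes a list apart
val List(a, b) = bigData
println("a : " + a + " = " + " b: " + b)

// x and y bind the first two elements; rest binds the remaining list
val x :: y :: rest = data
println("x : " + x + " = " + " y: " + y + " = " + rest)
```

```scala
// Insertion sort written with pattern matching; `insert` is a hypothetical helper name
def insert(elem: Int, sorted: List[Int]): List[Int] = sorted match {
  case head :: tail if head < elem => head :: insert(elem, tail)
  case _                           => elem :: sorted
}

def sortList(list: List[Int]): List[Int] = list match {
  case Nil          => Nil
  case head :: tail => insert(head, sortList(tail))
}

val shuffledData = List(6, 3, 5, 6, 2, 9, 1)
println(sortList(shuffledData)) // List(1, 2, 3, 5, 6, 6, 9)

// A standard curried merge sort, matching how mergedsort is called below;
// `less` decides the ordering
def mergedsort[T](less: (T, T) => Boolean)(input: List[T]): List[T] = {
  def merge(xs: List[T], ys: List[T]): List[T] = (xs, ys) match {
    case (Nil, _) => ys
    case (_, Nil) => xs
    case (x :: xtail, y :: ytail) =>
      if (less(x, y)) x :: merge(xtail, ys)
      else y :: merge(xs, ytail)
  }
  val n = input.length / 2
  if (n == 0) input
  else {
    val (left, right) = input.splitAt(n)
    merge(mergedsort(less)(left), mergedsort(less)(right))
  }
}

println(mergedsort((x: Int, y: Int) => x < y)(List(3, 7, 9, 5))) // List(3, 5, 7, 9)
// Fixing only the comparator yields a reusable descending sort
val reversed_mergedsort = mergedsort((x: Int, y: Int) => x > y) _
println(reversed_mergedsort(List(3, 7, 9, 5)))                     // List(9, 7, 5, 3)
```

Map / Filter functions

```scala
println(List(1, 2, 3, 4, 6).map(_ + 1)) // List(2, 3, 4, 5, 7)
val data = List("Scala", "Hadoop", "Spark")
println(data.map(_.length))             // List(5, 6, 5)
// println(data.map(_.…))
println(data.map(_.toList))             // a list of character lists
println(data.flatMap(_.toList))         // one flat list of characters
// All pairs (i, j) with 1 <= j < i < 10
println(List.range(1, 10).flatMap(i => List.range(1, i).map(j => (i, j))))

var sum = 0
List(1, 2, 3, 4, 5).foreach(sum += _)
println("sum : " + sum)                 // sum : 15

println(List(1, 2, 3, 4, 6, 7, 8, 9, 10).filter(_ % 2 == 0)) // List(2, 4, 6, 8, 10)
println(data.filter(_.length == 5))     // List(Scala, Spark)
```

Partition / Find / TakeWhile

`partition` splits a list into a pair of lists (the elements that satisfy a predicate and those that do not), `find` returns the first matching element wrapped in an `Option`, and `takeWhile` keeps the longest prefix whose elements all satisfy the predicate.

FoldLeft / FoldRight

`foldLeft` is equivalent to the `/:` operator. It folds from the left, so summing 1 to 100 from a start value of 0 evaluates as `((((0 + 1) + 2) + 3) + 4) + …`.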
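To make that nesting visible, here is a small sketch, assuming Scala 2.13, that folds into strings so each step records its own parentheses. `FoldOrder` and its field names are hypothetical. It also compares the numeric results, because subtraction gives different answers depending on fold direction.

```scala
object FoldOrder {
  // foldLeft: the accumulator enters from the left, ((0+1)+2)+3
  val leftNesting: String =
    List(1, 2, 3).foldLeft("0")((acc, x) => s"($acc+$x)")

  // foldRight: the accumulator enters from the right, 1-(2-(3-100))
  val rightNesting: String =
    List(1, 2, 3).foldRight("100")((x, acc) => s"($x-$acc)")

  // With a non-associative operator the direction changes the result
  val leftValue: Int = List(1, 2, 3).foldLeft(100)(_ - _)   // ((100-1)-2)-3 = 94
  val rightValue: Int = List(1, 2, 3).foldRight(100)(_ - _) // 1-(2-(3-100)) = -98

  def main(args: Array[String]): Unit = {
    println(leftNesting)  // (((0+1)+2)+3)
    println(rightNesting) // (1-(2-(3-100)))
    println(leftValue)    // 94
    println(rightValue)   // -98
  }
}
```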
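```scala
// Both lines print 5050; /: is deprecated since Scala 2.13 in favor of foldLeft
println((1 to 100).foldLeft(0)(_ + _))
println((0 /: (1 to 100))(_ + _))

// foldRight is equivalent to :\ and folds from the right: 1 - (2 - (3 - (4 - (5 - 100))))
println((1 to 5).foldRight(100)(_ - _)) // -97
println(((1 to 5) :\ 100)(_ - _))       // -97

println(List(1, -3, 4, 2, 6).sortWith(_ < _)) // List(-3, 1, 2, 4, 6)
```

Apply / Make / Range

```scala
println(List.apply(1, 2, 3))  // List(1, 2, 3)
println(List.fill(3)(5))      // List(5, 5, 5); early Scala versions spelled this List.make(3, 5)
println(List.range(1, 5))     // List(1, 2, 3, 4)
println(List.range(9, 1, -3)) // List(9, 6, 3)

// zip pairs elements by position; unzip splits the pairs again
val zipped = "abcde".toList zip List(1, 2, 3, 4, 5)
println(zipped)       // List((a,1), (b,2), (c,3), (d,4), (e,5))
println(zipped.unzip) // (List(a, b, c, d, e),List(1, 2, 3, 4, 5))

// Merging
println(List(List('a', 'b'), List('c'), List('d', 'e')).flatten) // List(a, b, c, d, e)
println(List.concat(List(), List('b'), List('c')))                // List(b, c)
// Element-wise product; early Scala versions offered List.map2 for this
println(List(10, 20).zip(List(10, 10)).map { case (p, q) => p * q }) // List(100, 200)
```

Other collections

Buffer

```scala
import scala.collection.mutable.{ArrayBuffer, ListBuffer}

val listBuffer = new ListBuffer[Int]
listBuffer += 1
listBuffer += 2
println(listBuffer)  // ListBuffer(1, 2)

val arrayBuffer = new ArrayBuffer[Int]()
arrayBuffer += 1
arrayBuffer += 2
println(arrayBuffer) // ArrayBuffer(1, 2)
```

Queue

```scala
import scala.collection.immutable.Queue
import scala.collection.mutable

// Immutable Queue: every operation returns a new queue
val empty = Queue[Int]()
val queue1 = empty.enqueue(1)
val queue2 = queue1.enqueueAll(List(2, 3, 4, 5)) // Scala 2.13; older versions used enqueue(List(...))
println(queue2)                  // Queue(1, 2, 3, 4, 5)
val (element, left) = queue2.dequeue
println(s"$element : $left")     // 1 : Queue(2, 3, 4, 5)

// Mutable Queue: += and ++= change the queue in place
val queue = mutable.Queue[String]()
queue += "a"
queue ++= List("b", "c")
println(queue)           // Queue(a, b, c)
println(queue.dequeue()) // a (removes and returns the head)
println(queue)           // Queue(b, c)
```

Stack

```scala
import scala.collection.mutable

val stack = mutable.Stack[Int]()
stack.push(1)
stack.push(2)
stack.push(3)
println(stack.top)   // 3
println(stack)       // Stack(3, 2, 1)
println(stack.pop()) // 3
println(stack)       // Stack(2, 1)
```

Set / TreeSet

```scala
import scala.collection.mutable
import scala.collection.immutable.TreeSet

val data = mutable.Set.empty[Int]
data ++= List(1, 2, 3)
data += 4
data --= List(2, 3)
println(data) // contains 1 and 4
data += 1     // already present, so nothing changes
println(data)
data.clear()
println(data) // empty

// TreeSet keeps its elements in ascending order
val treeSet = TreeSet(9, 3, 1, 8, 0, 2, 7, 4, 6, 5)
println(treeSet)        // TreeSet(0, 1, 2, 3, 4, 5, 6, 7, 8, 9)
val treeSetForChar = TreeSet("Spark", "Scala", "Hadoop")
println(treeSetForChar) // TreeSet(Hadoop, Scala, Spark)
```

Map / TreeMap

```scala
val map = Map.…
```

Scala is rooted in functional programming, which emphasizes immutability: the inability of a variable or object to change its state.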
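For the Map / TreeMap entry, here is a minimal sketch assuming Scala 2.13's default immutable `Map` and `scala.collection.immutable.TreeMap`. The object name, keys, and values are illustrative. Adding or removing an entry returns a new map and leaves the original unchanged. `TreeMap` keeps its entries sorted by key.

```scala
import scala.collection.immutable.TreeMap

object MapDemo {
  // Immutable Map: + and - build new maps; `base` itself never changes
  val base: Map[String, Int] = Map("Spark" -> 1, "Hadoop" -> 2)
  val added: Map[String, Int] = base + ("Scala" -> 3)
  val removed: Map[String, Int] = added - "Hadoop"

  // TreeMap keeps its entries ordered by key
  val sorted: TreeMap[String, Int] =
    TreeMap("Spark" -> 1, "Scala" -> 3, "Hadoop" -> 2)

  def main(args: Array[String]): Unit = {
    println(added.get("Scala"))         // Some(3)
    println(base.contains("Scala"))     // false
    println(removed.contains("Hadoop")) // false
    println(sorted.keys.toList)         // List(Hadoop, Scala, Spark)
  }
}
```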
